Today Cisco announced their entry in the Hyperconverged Infrastructure marketplace, a solution called HyperFlex.

Hyperconverged Infrastructure (HCI) can be thought of as “Virtualization in a Box”. An HCI solution is one that offers everything needed for server virtualization: compute, memory, network, storage, hypervisor, and management/orchestration for all of these.

Cisco’s HyperFlex solution uses:

- Cisco Unified Computing System (UCS) to provide the compute, memory, and storage hardware

- Cisco UCS Fabric Interconnects to provide the network hardware

- Cisco UCS Manager for compute, memory, and network management

- VMware ESXi for the hypervisor

- SpringPath software, re-branded as the Cisco HX Data Platform, to provide the data services, and storage optimization and orchestration

The HyperFlex solution will initially be offered in three different ways: small footprint, capacity-heavy, and compute-heavy.

I’ll walk through the various components and the complete solution below.

The Pieces

Let’s take a brief look at some of the different parts, before looking at the overall solution.

Cisco Unified Computing System (UCS)

I remember when Cisco first offered the UCS product. It seemed to confuse people — here was a network company, offering a blade server. It wasn’t as confusing as if a car manufacturer was suddenly offering a soft drink, but customers were still hesitant at first.

It didn’t take long for everyone to realize that pretty much no one uses a server without connecting it to a network and if you’re going to have built-in networking you’d be hard-pressed to do better than getting it from Cisco.

With UCS you can get a large number of cores, a lot of RAM, and a mess of network bandwidth in a relatively small form factor. All this makes UCS a great platform for virtualization. I have a number of customers using it for exactly that.

Cisco UCS Fabric Interconnects

The Fabric Interconnects are the key to UCS scalability. The Fabric Interconnect puts all UCS blades and chassis that are connected to it into a single, highly-available management domain. Cisco’s Fabric Extender (FEX) technology allows up to 20 individual UCS chassis to be combined into one managed system. Cisco also offers a Virtual Machine Fabric Extender (VM-FEX), which collapses virtual switches with physical switches and can simplify network management in a virtualized environment.

Cisco UCS Manager

Cisco’s UCS Manager software allows the management of multiple UCS blades and chassis through a single interface. It has impressive auto-discovery capabilities, detecting added and updated hardware immediately, and updating its inventories accordingly.

VMware ESXi Hypervisor

What’s there to say here that I haven’t already said in the past? It’s obvious I’m a big fan of VMware. It’s the most mature and feature-rich platform for server virtualization out there.

SpringPath

Here’s where things start to get really interesting.

For those of you who aren’t familiar with them, SpringPath is a software startup company. Cisco is actually one of the companies that invested in SpringPath.

SpringPath describes themselves as a hyperconvergence software company. (They used to describe themselves as a hyperconverged infrastructure company. I’m glad they changed their messaging. To me, you’re not actually offering HCI unless your solution includes everything you need, and SpringPath still requires hardware to run on. Software is funny like that.)

A year ago, during Storage Field Day 7, I had the opportunity to visit SpringPath HQ right after they came out of stealth mode and got a pretty thorough deep-dive into what they offer. You can see recordings from that session here.

The short version is that the SpringPath Data Platform ties together all of the storage installed in the physical hosts in a cluster into a single pool of storage and then serves that storage to the hypervisor and virtual machines on the cluster. In that sense, it’s similar in concept to VMware’s Virtual SAN (VSAN) offering.

However, the power of the SpringPath Data Platform is in its flexibility. SpringPath’s storage management is built upon their HALO (Hardware Agnostic Log-Structured Objects) architecture. In addition to providing optimization services like automated tiering and inline data compression and deduplication, HALO allows SpringPath to separate data at rest from data access which in turn allows it options in how the storage is presented to the hypervisor — or hypervisors…

Yes, a design goal of SpringPath is to not only allow it to work with the customer’s choice of hypervisor, but to also allow a single pool of storage resources to be presented to more than one hypervisor simultaneously.

- To ESXi, SpringPath presents NFSv3 with support for VAAI and VVols

- To KVM, SpringPath presents the choice of NFS or Cinder

- To Hyper-V, SpringPath presents SMB

Additionally, SpringPath supports containers and even bare metal deployments as well.
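To make the log-structured idea a bit more concrete, here’s a toy sketch in Python. To be clear, this is not SpringPath’s HALO code, and the class, method, and file names are all made up for illustration. It only shows the general pattern: new data is appended to a log rather than overwritten in place, duplicate blocks are detected by content hash, and blocks are compressed inline before they’re stored.

```python
import hashlib
import zlib


class ToyLogStore:
    """Append-only log with inline dedup and compression (illustrative only)."""

    def __init__(self):
        self.log = []       # append-only list of compressed blocks
        self.index = {}     # content hash -> position in the log
        self.objects = {}   # object name -> ordered list of content hashes

    def write(self, name, data, block_size=4096):
        hashes = []
        for start in range(0, len(data), block_size):
            block = data[start:start + block_size]
            digest = hashlib.sha256(block).hexdigest()
            if digest not in self.index:
                # New content: compress it and append. Nothing is overwritten.
                self.index[digest] = len(self.log)
                self.log.append(zlib.compress(block))
            hashes.append(digest)
        # Writing an object only replaces its list of pointers into the log.
        self.objects[name] = hashes

    def read(self, name):
        return b"".join(
            zlib.decompress(self.log[self.index[h]]) for h in self.objects[name]
        )


store = ToyLogStore()
payload = b"A" * 8192 + b"B" * 4096      # hypothetical disk contents
store.write("vm1.vmdk", payload)
store.write("vm2.vmdk", payload)         # identical content adds no new blocks
```

Because the objects are just lists of pointers into the log, how the data sits at rest is independent of how it gets handed to a client, which is the kind of separation that would let one pool be presented over different protocols.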

All in all, I was fairly impressed with what I saw of SpringPath, and they’ve been working on it for a year since I had my deep-dive.

The Cisco HyperFlex Solution

As I mentioned above, HyperFlex is essentially the SpringPath Data Platform, running on Cisco UCS hardware, supporting the VMware ESXi hypervisor. Of course, as part of HyperFlex, the SpringPath portion is re-branded as the Cisco HX Data Platform, but have no doubt — it’s SpringPath under the cover.

Cisco is positioning HyperFlex as a “Software-defined everything” solution. Obviously, I buy into the idea that it’s got software-defined storage. I’m also willing to buy that the combination of the Fabric Interconnects with FEX and VM-FEX counts as software-defined networking. I’m not, however, entirely sold on the idea that Cisco UCS is “software-defined compute”, a term I’d never heard before the product briefing I received.

To be fair, I’m not saying it isn’t that, I’m just not 100% sure it is. Maybe it’s me still adjusting to the newness of the term.

Management

During the briefing, Cisco pointed out that you can manage the entire HyperFlex solution through a single interface. They then went on to say that you’d use the UCS Manager to manage the hosts and Fabric Interconnects, and a plugin to vCenter to manage the storage.

(I’ve probably re-read the previous paragraph more times than you have. Yes, they said “single interface”, and then yes, they immediately followed that by describing the two interfaces you’d use to manage the solution. It confused me, too.)

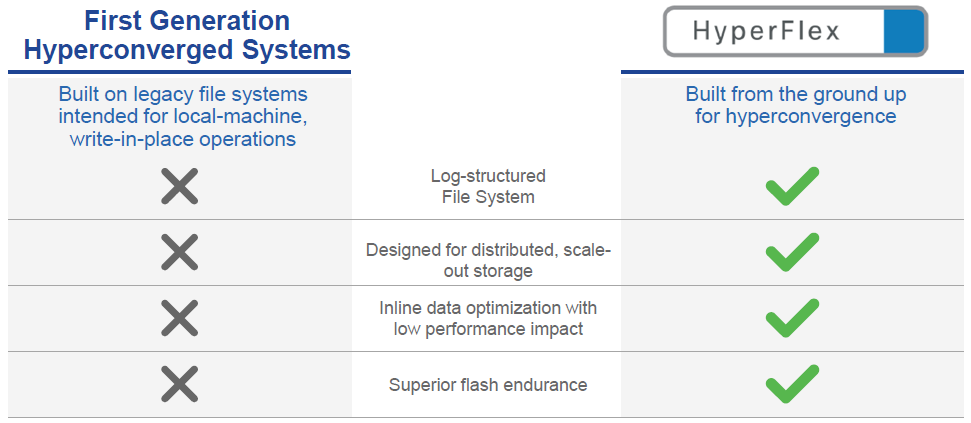

Competitive Comparisons

Cisco spent some time in the briefing comparing HyperFlex to other HCI solutions already on the market. To do so, they lumped them together, calling them “first-generation hyperconverged systems”. To illustrate their points, they provided the following chart:

I’m with them 100% on the first point. The SpringPath — excuse me — the Cisco HX Data Platform is the only HCI data layer I’m aware of that has a log-structured file system.

I’m willing to listen and learn more about the fourth point. I have no details on how they’re basing this comparison. It’s possible that there’s something in the SpringPath, er, Cisco HX Data Platform that writes to Flash in a way that supports Flash endurance better than the other HCI vendors do, I just don’t know.

It is the second and third points, however, that did cause me to question whether or not the author of the chart had any familiarity whatsoever with any other HCI product out there. Cisco gets a “no-pass” on these two points.

Snapshots and Clones

The HyperFlex solution uses native VMware snapshots for additional data protection. It uses native VMware clones (pointer-based writable snapshots) as a means for rapid provisioning of VMs.

Scalability

As with other clustered systems, there are two easy ways to scale compute, memory, and storage in a HyperFlex solution. The first way is to upgrade the nodes in the cluster, adding more compute, memory, and/or storage. The second way is to expand the cluster by adding additional nodes.

For those who wish to scale compute and memory without adding additional capacity, HyperFlex has an option there as well. Non-HyperFlex nodes can be connected to the HyperFlex cluster’s storage by use of a piece of add-on software called IOVisor. Those hosts can then be added to the vSphere cluster via vCenter, and they can start serving VMs stored on the HyperFlex cluster.

Initial Support and Roadmap

At GA, the HX Data Platform will only support presenting storage as NFS, and HyperFlex will only support the VMware ESXi hypervisor. HyperFlex support for both Hyper-V and KVM is on the roadmap.

I believe that Cisco has chosen to roll HyperFlex out in a controlled fashion and is thus limiting the initial supported functionality. Once they’ve had it installed and running in the wild for a while, I expect we’ll see additional hypervisor support.

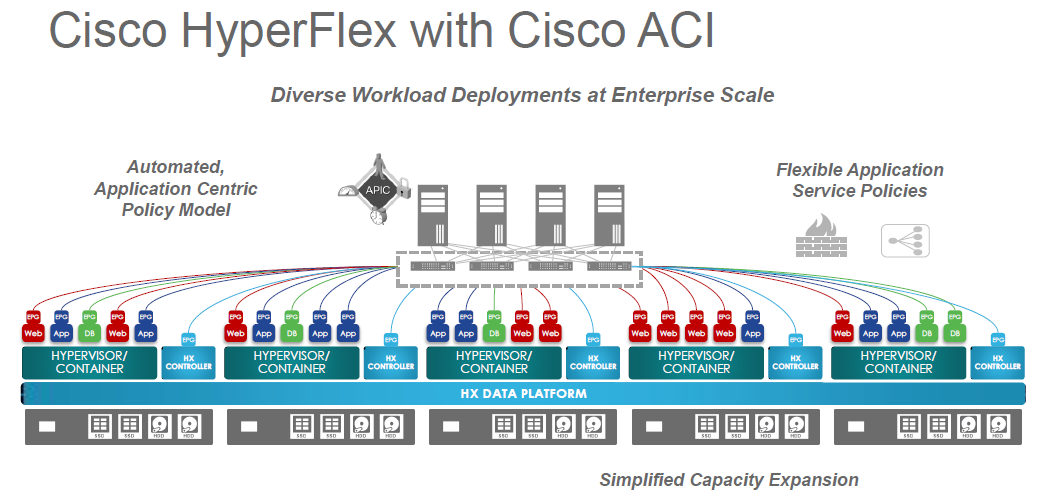

Positioning

It shouldn’t be too surprising that Cisco is positioning HyperFlex as an ideal part of a larger enterprise-scale data center solution when combined with their Application Centric Infrastructure (ACI). I’ll admit, the combination is an intriguing one.

Here’s a graphic giving a logical depiction of what that combined solution might look like.

Form Factors

The consumption model for HyperFlex is an interesting one. Customers will purchase the hardware, just like they’re used to. The HX Data Platform, however, is purchased on an annual subscription model. VMware licenses can be purchased with a HyperFlex solution or customers can provide their own.

The HyperFlex nodes are “configure to order”, giving customers options on CPU, RAM, and storage. The nodes will be factory-installed and configured, so they’ll arrive with the HX Data Platform already installed and all ESXi options already configured.

Initially, Cisco is offering three base cluster options.

The first option, the small-footprint configuration, uses HX220c nodes. It can be purchased with a minimum of three nodes and a maximum of eight. (Cisco will be raising the maximum number of nodes per cluster in future releases.)

Based on a minimally-configured three-node cluster and a maximally-configured eight-node cluster, this HyperFlex option would allow for a range of:

- 12 to 96 CPU cores

- 12GB to 2,048GB (2TB) of RAM

- 900GB to 128TB raw capacity
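If you want to see where a range like that comes from, the arithmetic is just per-node resources multiplied by node count. Here’s a small Python sketch of it. The per-node numbers are my own back-calculation (the cluster totals above divided by three and by eight, using decimal terabytes), not figures from a Cisco spec sheet, and the `NodeConfig` and `cluster_totals` names are made up for the example.

```python
from dataclasses import dataclass


@dataclass
class NodeConfig:
    cores: int
    ram_gb: int
    raw_gb: int


def cluster_totals(node: NodeConfig, node_count: int) -> dict:
    """Multiply one node's resources by the number of nodes in the cluster."""
    return {
        "cores": node.cores * node_count,
        "ram_gb": node.ram_gb * node_count,
        "raw_gb": node.raw_gb * node_count,
    }


# Per-node values inferred by dividing the cluster totals by the node count.
# They are assumptions for illustration, not Cisco specifications.
hx220c_min = NodeConfig(cores=4, ram_gb=4, raw_gb=300)
hx220c_max = NodeConfig(cores=12, ram_gb=256, raw_gb=16_000)

smallest = cluster_totals(hx220c_min, node_count=3)
largest = cluster_totals(hx220c_max, node_count=8)
print(smallest)
print(largest)
```

The three-node result works out to 12 cores, 12GB of RAM, and 900GB raw, and the eight-node result to 96 cores, 2,048GB of RAM, and 128,000GB (128TB) raw, matching the range above.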

The second option is what Cisco refers to as the “capacity-heavy” configuration, the HX240c nodes. As with the first option, this can be purchased with a minimum of three and a maximum of eight nodes.

Again, based on a minimally-configured three-node cluster and a maximally-configured eight-node cluster, this HyperFlex option would allow for a range of:

- 12 to 96 CPU cores

- 12GB to 3,072GB (3TB) of RAM

- 900GB to 284TB raw capacity

The third option is what Cisco refers to as the “compute-heavy” configuration. This configuration shows off the flexibility of the HX Data Platform, as the cluster is a hybrid — a combination of HX240c nodes with UCS B200.

The chassis shown on the right (bottom half of graphic) can support anywhere from one to eight UCS B200 blade servers.

Based on three minimally-configured HX240c nodes plus a minimally-configured B200, and three maximally-configured HX240c nodes plus a maximally-configured B200, this HyperFlex option would allow for a range of:

- 16 to 416 CPU cores

- 16GB to 8,222GB (8.2TB) of RAM

- 900GB to 144TB raw capacity

Cisco didn’t say so specifically, but I’m assuming that in the compute-heavy configuration the B200 blades are all running the IOVisor software to connect to the storage in the HX240c nodes.

Fabric Interconnects

One of the points that Cisco downplayed in the briefing was ordering fabric interconnects. As of the time of the launch, customers must purchase fabric interconnects with their HyperFlex cluster. In the future, customers who already own fabric interconnects may be able to take advantage of their existing interconnect hardware for HyperFlex.

Availability

The three base configurations described above are available to be ordered now.

You can get details on these configurations from Cisco’s new HyperFlex landing page.

GeekFluent’s Conclusions

For starters, as I mentioned, I’m a big fan of Cisco UCS, VMware, and SpringPath. I think putting the three of them together is a very powerful combination.

I also believe that the HyperFlex launch will put an end to any rumors that Cisco might be considering purchasing Nutanix. Cisco is coming out of the gate swinging on this one and intends to beat Nutanix in the Hyperconverged arena.

I think Cisco is in good shape to go after virtualization marketshare. They’ve been doing that with UCS for years now, both on their own and partnered with EMC (VCE) and NetApp (FlexPod). That said, I’m not sure if they’re in good shape to go after hyperconverged marketshare. The pure-FUD (Fear, Uncertainty, and Doubt), low-fact content of their competitive comparison concerns me. (Although, come to think of it, Nutanix has done OK for themselves with pure-FUD, low-fact marketing tactics, so we’ll just have to see how that goes.)

While I understand the impulse to roll new functionality out slowly, and even the business wisdom behind doing so, I have to admit I’m a little disappointed to not see more of what makes SpringPath so unique in the first version of the HX Data Platform. Hopefully more of that flexibility and more customer options will be coming soon.

Once Cisco rolls out more SpringPath functionality in HyperFlex — specifically support for KVM and OpenStack — I fully expect Cisco to expand their Metapod solution offerings to include HyperFlex infrastructure in addition to the orchestration and support they offer now.

Expect a lot of rumor, speculation, and outright questioning along the lines of either “when is Cisco going to acquire SpringPath” or “how come Cisco hasn’t just acquired SpringPath yet?”

Lastly, I wonder about the future of the Cisco partnership with SimpliVity. I like the SimpliVity solution, and I think OmniStack on UCS is a great option. SimpliVity offers full data optimization features, has some data protection features not present in HyperFlex, and — if I were a betting man — I’d predict that SimpliVity will beat HyperFlex to market with support for Hyper-V, if only because they’ve been working on it longer. I’d understand if Cisco withdrew from their SimpliVity partnership in favor of their own offering, but I hope they don’t. I’m always in favor of more options being available to customers.

Feedback

Disagree with any of my conclusions? Have any wild speculation of your own, either based on my conclusions or entirely your own? Add a comment below to share your thoughts.

A well balanced presentation, enthusiastic, but not Kool-Aid swilling. I am a little concerned that only one HCI offering, SimpliVity, seems to see the value of data optimization. It is very convenient to have the storage bundled into a solution, but it is also a limiting factor when compared to external SAN or NFS. SimpliVity mitigates these issues by adding extra value to the storage that will fit and scaling the overall solution across multiple locations.

The SpringPath Data Platform provides storage optimization (data deduplication and compression).

Hi Dave,

Nice write-up.

you mention you know of only 1 HCI data layer that has a log-structured file system. Allow me to double that amount. Open vStorage (https://www.openvstorage.org/) also uses a log structured approach. You can read more about the technical details on the blog http://blog.openvstorage.com/2014/09/location-time-based-or-magical-storage/

Kind regards,

Wim Provoost

Product Manager Open vStorage

Wim,

I have to admit I am unfamiliar with Open vStorage. Thanks for bringing it to my attention. I’ll check it out.

-Dave
